Let’s start building a strong cloud native application architecture, with a powerful cloud native diagram.

A school kid called a cloud computing company. The company executive asked him why he had contacted them. The kid said, “I want to hire your services.” The executive was both excited and perplexed as to what services they could offer to a kid. The kid coolly replied, “I want Homework-as-a-Service.”

- What is Cloud Native Architecture?

- Benefits of a Cloud Native Architecture

- Cloud Native Architecture Patterns

- AWS DevOps Tools for Cloud Native Architecture

- Cloud Native Architecture Diagram

- Cloud Native Architecture Case Studies

- Conclusion of Cloud Native Application Architecture

- FAQs

This blog is also available on DZone; don’t forget to follow us there!

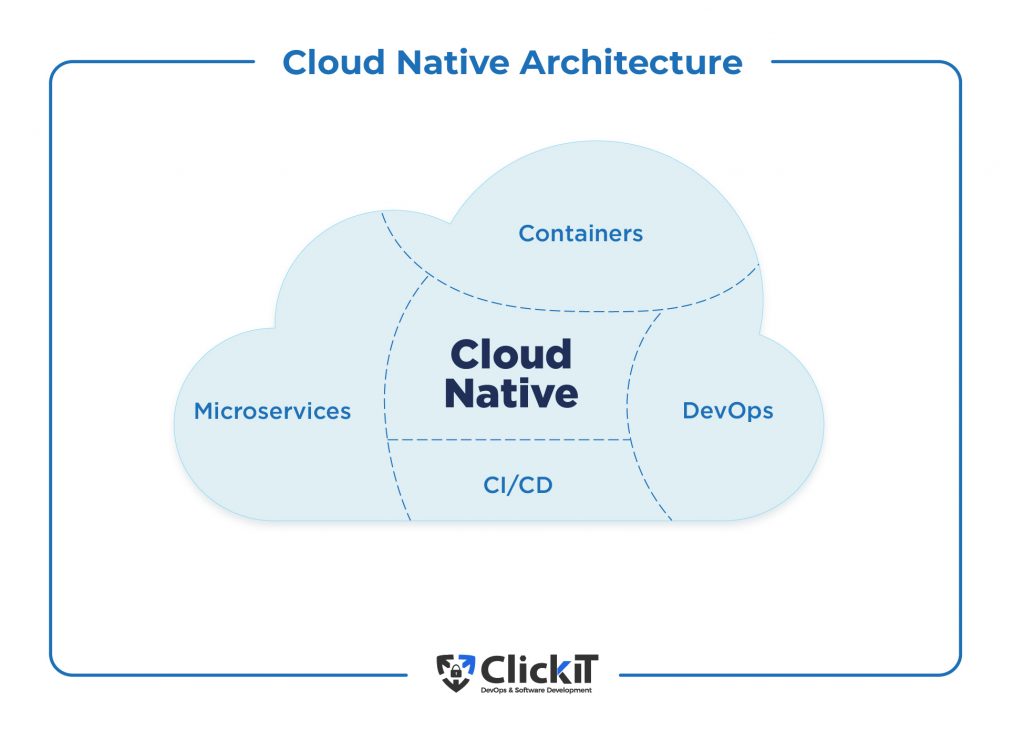

What is Cloud Native Architecture?

Cloud-native architecture is an innovative software development approach that fully leverages the cloud computing model by combining methodologies from cloud services, DevOps practices, and software development principles. It abstracts all IT layers, including networking, servers, data centers, operating systems, and firewalls.

It enables organizations to build applications as loosely coupled services using microservices architecture and run them on dynamically orchestrated platforms. Applications built on the cloud-native application architecture are reliable, deliver scale and performance, and offer faster time to market.

Challenges of Cloud Native Architecture

In the traditional software development environment, developers used the so-called “waterfall” model and monolithic architecture to create software sequentially.

- The designers prepare the product design along with related documents.

- Developers write the code and send it to the testing department.

- The testing team runs various tests to identify errors and gauge the performance of the application.

- Developers receive the code back when errors are found.

- Once the code passes all the tests, developers deploy it to a pre-production test environment and then to the live environment.

If you have to update the code or add/remove a feature, you must go through the entire process again. When multiple teams work on the same project, coordinating with each other on code changes is a big challenge. A monolithic codebase also tends to limit every team to a single programming language. Moreover, deploying a large software project requires a huge infrastructure setup and an extensive functional testing mechanism. The entire process is inefficient and time-consuming.

To resolve most of these challenges, developers introduced microservices architecture. In this service-oriented architecture, developers create applications as loosely coupled, independent services that can communicate with each other via APIs.

A cloud native app built on microservices architecture leverages the highly scalable, flexible, and distributed nature of the cloud to produce customer-centric software products in a continuous delivery environment.

The striking feature of the cloud native architecture is that it allows you to abstract all the infrastructure layers, such as databases, networks, servers, OS, security, etc., enabling you to automate and manage each layer using a script independently. At the same time, you can instantly spin up the required infrastructure using code. As such, developers can focus on adding features to the software and orchestrating the infrastructure instead of worrying about the platform, OS or the runtime environment.

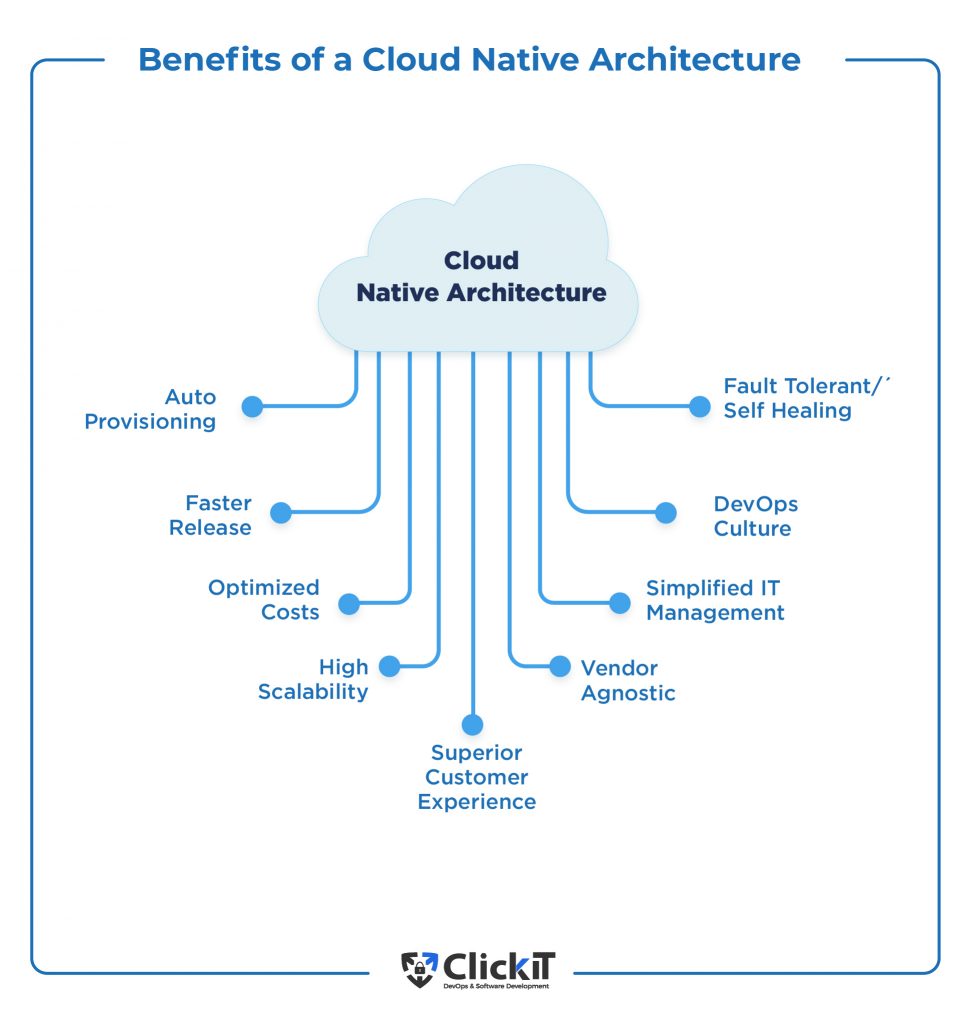

Benefits of a Cloud Native Architecture

The benefits of cloud native architecture are:

- Optimized costs

- Faster Release

- Vendor Agnostic

- DevOps Culture

- High scalability

- Superior customer experience

- Auto-provisioning

- Simplified IT provisioning

Accelerated Software Development Lifecycle (SDLC)

A cloud native application complements a DevOps-based continuous delivery environment with automation embedded across the product lifecycle, bringing speed and quality to the table. Cross-functional teams, consisting of members from design, development, testing, operations, and business, collaborate seamlessly throughout the SDLC.

With automated CI/CD pipelines in the development segment and IaC-based infrastructure in the operations segment working in tandem, there is better control over the entire process which makes the whole system quick, efficient and error-free. They also maintain transparency across the environment. All these elements significantly accelerate the software development lifecycle.

A software development lifecycle (SDLC) refers to various phases involved in developing a software product. A typical SDLC comprises 7 different phases.

- Requirements Gathering / Planning Phase: Gathering information about current problems, business requirements, customer requests etc.

- Analysis Phase: Define prototype system requirements, market research for existing prototypes, analyzing customer requirements against proposed prototypes etc.

- Design Phase: Prepare product design, software requirement specification docs, coding guidelines, technology stack, frameworks etc.

- Development Phase: Writing code to build the product as per specification and guidelines documents

- Testing Phase: Developers test the code for errors and bugs, assessing its quality based on the SRS document.

- Deployment Phase: Infrastructure provisioning, software deployment to production environment

- Operations and Maintenance Phase: product maintenance, handling customer issues, monitoring the performance against metrics etc.

Faster Time to Market

Speed and quality of service are two important requirements in today’s rapidly evolving IT world. Cloud native application architecture augmented by DevOps practices helps you to easily build and automate continuous delivery pipelines to deliver software out faster and better. IaC tools make it possible to automate infrastructure provisioning on-demand while allowing you to scale or take down infrastructure on the go. Simplified IT management and better control over the entire product lifecycle accelerate the SDLC, enabling organizations to achieve faster time to market.

DevOps focuses on a customer-centric approach, where teams are responsible for the entire product lifecycle. Consequently, updates and subsequent releases become faster and better. Reduced development time, overproduction, overengineering, and technical debt can also lower overall development costs. Similarly, improved productivity results in increased revenues.

High Availability and Resilience

Modern IT systems have no place for downtime. If your product suffers frequent outages, you risk losing your customers. By combining a cloud native architecture with microservices and Kubernetes, you can build resilient, fault-tolerant systems that are self-healing. When a component fails, your application remains available because the faulty instance is isolated and replacement instances are spun up automatically. As a result, organizations can achieve higher availability and uptime and an improved customer experience.
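The self-healing behavior described above can be pictured as a reconcile loop: compare the desired number of instances with the healthy ones and start replacements for any that failed. The following is a minimal in-memory sketch, not how Kubernetes itself is implemented; the `Cluster` and `Instance` classes and the `app-N` names are hypothetical.

```python
from dataclasses import dataclass, field
from itertools import count


@dataclass
class Instance:
    name: str
    healthy: bool = True


@dataclass
class Cluster:
    desired: int                                  # how many instances should run
    instances: list = field(default_factory=list)
    _ids: count = field(default_factory=lambda: count(1))

    def reconcile(self) -> list:
        """Drop unhealthy instances, then start replacements until the
        number of running instances matches the desired count."""
        self.instances = [i for i in self.instances if i.healthy]
        started = []
        while len(self.instances) < self.desired:
            instance = Instance(name=f"app-{next(self._ids)}")
            self.instances.append(instance)
            started.append(instance.name)
        return started


cluster = Cluster(desired=3)
cluster.reconcile()                    # initial start-up of three instances
cluster.instances[1].healthy = False   # simulate a failure of the second one
replacements = cluster.reconcile()     # the faulty instance is replaced
```

Running `reconcile()` repeatedly is safe: when nothing has failed, it starts nothing.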

Low costs

The cloud native application architecture comes with a pay-per-use model, meaning that organizations pay only for the resources they use while benefiting from the provider’s economies of scale. As CapEx turns into OpEx, businesses can redirect money that would have gone into upfront infrastructure purchases toward development resources. On the OpEx side, the cloud-native environment leverages containerization technology managed by open-source Kubernetes software.

Other cloud native tools are also available to manage the system efficiently. With serverless architecture, standardized infrastructure, and open-source tools, operating costs come down as well, resulting in a lower total cost of ownership (TCO).

Turns your Apps into APIs

Today, businesses need to deliver customer-engaging apps. Cloud-native environments enable you to connect massive enterprise data with front-end apps using API-based integration. Since every IT resource is in the cloud and uses the API, your application also turns into an API. It not only delivers an engaging customer experience but also allows you to use your legacy infrastructure which extends it into the web and mobile era for your cloud native app.

Cloud Native Architecture Patterns

Due to the popularity of cloud native application architecture, several organizations have developed design patterns and best practices to facilitate smoother operations. Here are the key cloud native architecture patterns:

Pay-as-you-Go

In cloud architecture, resources are centrally hosted and delivered over the internet using a pay-per-use or pay-as-you-go model. Customers incur charges based on their resource usage. It means you can scale resources as and when required and avoid paying for idle capacity. It also gives you flexibility and a choice of services at various price points. For instance, serverless architecture provisions resources only when the code runs, so you pay only when your application is active.

Self-service Infrastructure

Infrastructure as a service (IaaS) is a key attribute of a cloud native application architecture. Whether you deploy apps on an elastic, virtual, or shared environment, they automatically realign to match the underlying infrastructure, scaling up and down to accommodate changing workloads.

It means you don’t have to seek permission from a server, load balancer, or central management system to create, test, or deploy IT resources. Waiting time is reduced and IT management is simplified.

Managed Services

Cloud architecture allows you to fully leverage cloud managed services in order to efficiently manage the cloud infrastructure, right from migration and configuration to management and maintenance while optimizing time and costs to the core. Treating each service as an independent lifecycle makes it easy to manage them using agile DevOps processes. You can work with multiple CI/CD pipelines simultaneously as well as manage them independently.

For instance, AWS Fargate is a serverless compute engine that lets you run containerized apps without managing servers, on a pay-per-use model. AWS Lambda is another service for running code without managing servers. Amazon RDS enables you to build, scale and manage relational databases in the cloud. Amazon Cognito helps you securely manage user sign-up, authentication, and authorization for your cloud apps. With the help of these services, you can set up and manage a cloud development environment with minimal cost and effort.

Globally Distributed Architecture

Globally distributed architecture is another key component of cloud-native architecture. It allows you to install and manage software across the infrastructure. It is a network of independent components installed at different locations.

These components share messages to work towards achieving a single goal. Distributed systems enable organizations to scale resources massively while giving the end-user the impression of working on a single machine. In these cases, resources such as data, software, or hardware are shared, and a single function runs simultaneously on multiple machines. These systems come with fault tolerance, transparency and high scalability. While client-server architecture was used earlier, modern distributed systems employ multi-tier, three-tier, or peer-to-peer network architectures.

Distributed systems offer unlimited horizontal scaling, fault tolerance and low latency. On the downside, they need intelligent monitoring, data integration and data synchronization. Avoiding network and communication failure is a challenge. The cloud vendor handles the governance, security, engineering, evolution and lifecycle control. It means you don’t have to worry about your cloud-native app’s updates, patches, and compatibility issues.
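Since network and communication failures cannot be avoided entirely, callers in a distributed system usually retry transient errors. Below is a minimal sketch of retrying with exponential backoff and jitter. The function name `call_with_retry`, its parameters, and the `flaky_remote_call` stand-in are illustrative assumptions, not part of any particular library.

```python
import random
import time


def call_with_retry(operation, attempts=4, base_delay=0.1, sleep=time.sleep):
    """Call operation(); on ConnectionError wait and try again.

    The wait doubles with each attempt and gets random jitter added so
    that many clients do not all retry at the same instant.
    """
    for attempt in range(attempts):
        try:
            return operation()
        except ConnectionError:
            if attempt == attempts - 1:
                raise  # out of retries: surface the failure to the caller
            delay = base_delay * (2 ** attempt)
            sleep(delay + random.uniform(0, delay))


calls = {"count": 0}


def flaky_remote_call():
    """Stand-in for a remote call that fails twice, then succeeds."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("temporary network failure")
    return "ok"


# The sleep function is injected so the demo does not actually wait.
result = call_with_retry(flaky_remote_call, sleep=lambda seconds: None)
```

Only errors that are safe to repeat should be retried this way; a non-idempotent operation needs extra care.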

Resource Optimization

In a traditional data center, organizations have to purchase and install the entire infrastructure beforehand. During peak seasons, the organization has to invest more in the infrastructure. Once the peak season is gone, the newly purchased resources lie idle, wasting your money. With a cloud architecture, you can instantly spin up resources whenever needed and terminate them after use. Moreover, you will be paying only for the resources used. It gives your development teams the luxury of experimenting with new ideas as they don’t have to acquire permanent resources.
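To make the comparison concrete, here is a small worked calculation. All numbers are hypothetical: a day with 20 quiet hours needing 2 servers and a 4-hour peak needing 10, at an invented price of 10 cents per server-hour. Prices are kept in whole cents to avoid floating-point rounding.

```python
def fixed_capacity_cost(hourly_demand, cents_per_server_hour):
    """Data-center style: provision for the peak and pay for it every hour."""
    return max(hourly_demand) * len(hourly_demand) * cents_per_server_hour


def elastic_cost(hourly_demand, cents_per_server_hour):
    """Cloud style: pay only for the servers running in each hour."""
    return sum(hourly_demand) * cents_per_server_hour


# Hypothetical demand profile for one day.
demand = [2] * 20 + [10] * 4
RATE_CENTS = 10  # hypothetical price per server-hour, in cents

fixed = fixed_capacity_cost(demand, RATE_CENTS)   # 10 servers * 24 h * 10 cents
elastic = elastic_cost(demand, RATE_CENTS)        # (40 + 40) server-hours * 10 cents
idle_spend = fixed - elastic                      # money spent on idle capacity
```

In this made-up profile, two thirds of the fixed-capacity bill pays for servers that sit idle outside the peak.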

Amazon Autoscaling

Autoscaling is a powerful feature of a cloud native architecture that lets you automatically adjust resources to maintain applications at optimal levels. The good thing about autoscaling is that you can abstract each scalable layer and scale specific resources. There are two ways to scale resources. Vertical scaling increases the configuration of the machine to handle the increasing traffic while horizontal scaling adds more machines to scale out resources. Vertical scaling is limited by the capacity of a single machine. Horizontal scaling has far more headroom, bounded mainly by service quotas and budget.

For instance, AWS offers horizontal auto-scaling out of the box. Be it Elastic Compute Cloud (EC2) instances, DynamoDB indexes, Elastic Container Service (ECS) containers or Aurora clusters, Amazon monitors and adjusts resources based on a unified scaling policy for each application that you define. You can either define scalable priorities such as cost optimization or high availability or balance both. The Autoscaling feature of AWS is free but you will be paying for the resources that are scaled out.
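A horizontal scaling decision can be sketched as a proportional rule: grow or shrink the instance count so that the per-instance metric moves back toward its target, within configured bounds. The formula below follows the rule documented for the Kubernetes Horizontal Pod Autoscaler; it is an illustration only, and AWS scaling policies may compute capacity differently. The function and parameter names are hypothetical.

```python
import math


def desired_capacity(current, metric, target, minimum, maximum):
    """Return the instance count that brings the average metric
    (for example, CPU percent per instance) back toward the target."""
    if current <= 0 or target <= 0:
        raise ValueError("current and target must be positive")
    wanted = math.ceil(current * metric / target)
    # Clamp to the configured bounds so scaling never runs away.
    return max(minimum, min(maximum, wanted))


scale_out = desired_capacity(current=4, metric=90, target=60, minimum=2, maximum=10)
scale_in = desired_capacity(current=4, metric=20, target=60, minimum=2, maximum=10)
capped = desired_capacity(current=4, metric=300, target=60, minimum=2, maximum=10)
```

The `minimum` bound protects availability during quiet periods, and the `maximum` bound caps spend during traffic spikes.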

12-Factor Methodology

To facilitate seamless collaboration between developers working on the same app and efficiently manage the app’s dynamic organic growth over time while minimizing software erosion costs, developers at Heroku developed a 12-factor methodology that helps organizations easily build and deploy apps in a cloud-native application architecture.

The key takeaways of this methodology are that the application should use a single codebase for all deployments, be packed with all dependencies isolated from each other, and have the configuration code separated from the app code.

Processes should be stateless so that you can run, scale, and terminate them separately. Similarly, you should build automated CI/CD pipelines while keeping the build, release, and run stages strictly separate. Another key recommendation is that the apps should be disposable so you can start, stop, and scale each process independently.

The 12-factor methodology suits the cloud architecture well. Another essential takeaway is that you need a loosely coupled architecture. Lastly, your dev, testing, and production environments should be as similar as possible. Containers (for example, Docker) and microservices help with this.

12-factor methodology in Cloud Native

| Factor | Principle | Description |
| --- | --- | --- |
| 1 | Codebase | Maintain a single codebase for each application that can be used to deploy multiple instances/versions of the same app and track it using a central version control system such as Git. |
| 2 | Dependencies | As a best practice, define all the dependencies of the app, isolate them and package them within the app. Containerization helps here. |
| 3 | Configurations | Store configuration that varies between deployments (credentials, resource handles, hostnames) in environment variables, separate from the app code. |
| 4 | Backing Services | Treat databases, message queues, caches, and other backing services as attached resources that can be swapped through configuration without code changes. |
| 5 | Build, Release, Run | Build, Release, and Run are the three stages of delivering the app. Keep them strictly separate. |
| 6 | Processes | While the app contains multiple processes, it is important to run all as a collection of stateless processes so that scaling becomes easy while unintended effects are eliminated. |
| 7 | Port-Binding | Make the app self-contained and export its services by binding to a port, rather than relying on a web server injected at runtime. |
| 8 | Concurrency | Scale out by running more processes of each type rather than making a single process larger. |
| 9 | Disposability | When applications built on a cloud-native application architecture go down, the app should gracefully dispose of broken resources and instantly replace them, ensuring a fast start-up and shutdown. |
| 10 | Dev / Prod Parity | Minimize differences between development and production environments. Building automated CI/CD pipelines, VCS, backing services, and containerization will help you achieve this. |
| 11 | Logs | Treat logs as event streams. Log storage should be decoupled from the app. Segregation and compilation of these logs lie in the execution environment. |
| 12 | Admin Processes | Run administrative and management tasks, such as database migrations, as one-off processes in the same environment as the app. |
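As an illustration of separating configuration from app code, the sketch below reads settings from environment variables so the same build can run unchanged in every environment. The variable names `DATABASE_URL`, `PORT`, and `DEBUG` and the example URL are assumptions chosen for this example.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class Settings:
    database_url: str
    port: int
    debug: bool


def load_settings(env=os.environ) -> Settings:
    """Build settings from environment variables (hypothetical names)."""
    return Settings(
        database_url=env["DATABASE_URL"],           # required: KeyError if missing
        port=int(env.get("PORT", "8080")),          # optional, with a default
        debug=env.get("DEBUG", "false").lower() == "true",
    )


# A plain dict stands in for the process environment in this example.
settings = load_settings({"DATABASE_URL": "postgres://db.internal/app", "PORT": "9000"})
```

Failing fast on a missing required variable surfaces a misconfigured deployment at start-up rather than at the first database call.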

Automation and Infrastructure as Code (IaC)

With microservices running in containers and powered by a modern system design, organizations can achieve speed and agility in business processes. To extend these benefits to production environments, businesses are now implementing Infrastructure as Code (IaC). By applying software engineering practices to automate resource provisioning, organizations can manage the infrastructure via configuration files.

By testing and versioning infrastructure definitions, you can automate deployments to maintain the infrastructure at the desired state. When resource allocation needs to be changed, you can simply define it in the configuration file and automatically apply it to the infrastructure. IaC brings disposable systems into the picture in which you can instantly create, manage and destroy production environments while automating every task. It brings speed and resilience, consistency and accountability while optimizing costs.

Cloud design strongly favors automation. You can automate infrastructure management using Terraform or CloudFormation, CI/CD pipelines using Jenkins or GitLab, and resource scaling with AWS built-in autoscaling features. A cloud-native architecture also helps you build cloud-agnostic apps that can be deployed to any cloud provider platform.
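The desired-state idea behind IaC can be sketched as a diff between what the configuration declares and what currently exists. This is a toy model under stated assumptions, not how Terraform or CloudFormation work internally; the `plan` and `apply` functions and the resource names are hypothetical.

```python
def plan(desired: dict, actual: dict) -> dict:
    """Compare declared resources with existing ones and list the changes."""
    shared = desired.keys() & actual.keys()
    return {
        "create": sorted(desired.keys() - actual.keys()),
        "update": sorted(name for name in shared if desired[name] != actual[name]),
        "delete": sorted(actual.keys() - desired.keys()),
    }


def apply(desired: dict, actual: dict) -> dict:
    """Carry out the plan and return the resulting infrastructure state."""
    changes = plan(desired, actual)
    result = dict(actual)
    for name in changes["delete"]:
        del result[name]
    for name in changes["create"] + changes["update"]:
        result[name] = desired[name]
    return result


# Hypothetical declared configuration and current state.
desired = {"web": {"instances": 3}, "db": {"size": "medium"}}
actual = {"web": {"instances": 2}, "cache": {"size": "small"}}

changes = plan(desired, actual)
new_state = apply(desired, actual)
second_plan = plan(desired, new_state)  # empty: applying again changes nothing
```

Because the second plan is empty, applying the same configuration twice is harmless, which is what makes automated, repeated runs safe.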

Automated Recovery

Today, customers expect your applications to always be available. To ensure high availability of all your resources, it is important to have a disaster recovery plan in hand for all services, data resources and infrastructure. Cloud architecture allows you to incorporate resilience into the apps right from the beginning. You can design self-healing applications that can recover data, source code repository and resources instantly.

For instance, IaC tools such as Terraform or CloudFormation allow you to automate the provisioning of the underlying infrastructure if the system crashes. From the provisioning of EC2 instances and VPCs to admin and security policies, you can automate all phases of the disaster recovery workflows. It also helps you to instantly roll back changes made to the infrastructure or recreate instances whenever needed. Similarly, you can roll back changes made to the CI/CD pipelines using CI automation servers such as Jenkins or GitLab. It means that disaster recovery is quick and cost-effective.

Immutable Infrastructure

Immutable infrastructure, or immutable deployment, is the practice of deploying servers in such a way that they are never edited or changed after deployment. When a change is required, the server is destroyed, and a new server instance is deployed in its place from a common image repository. No deployment depends on a previous one, so there is no configuration drift. As every deployment is time-stamped and versioned, you can roll back to an earlier version if needed.
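The replace-instead-of-edit rule can be modeled in a few lines. In this sketch a server is a frozen record created from an image version, a release always creates a new server, and a rollback redeploys the previous version. The `Server` and `Deployment` classes and the `shop-api` image tags are hypothetical.

```python
from dataclasses import dataclass, FrozenInstanceError


@dataclass(frozen=True)
class Server:
    image: str  # versioned image tag from a common image repository


class Deployment:
    def __init__(self):
        self.history = []    # released image versions, oldest first
        self.current = None  # the server currently in service

    def release(self, image: str) -> Server:
        """Deploy a change by replacing the server, never by editing it."""
        self.history.append(image)
        self.current = Server(image)
        return self.current

    def rollback(self) -> Server:
        """Redeploy the previous version as a fresh server."""
        if len(self.history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self.history.pop()
        self.current = Server(self.history[-1])
        return self.current


deployment = Deployment()
deployment.release("shop-api:1.0")
deployment.release("shop-api:1.1")
previous = deployment.rollback()

try:
    previous.image = "shop-api:hotfix"  # in-place edits are rejected
except FrozenInstanceError:
    edited_in_place = False
else:
    edited_in_place = True
```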

Immutable infrastructure enables administrators to replace problematic servers easily without disturbing the application. In addition, it makes deployments predictable, simple and consistent across all environments. It also makes testing straightforward. Auto Scaling becomes easy, too. Overall, it improves deployed environments’ reliability, consistency, and efficiency. Docker, Kubernetes, Terraform, and Spinnaker are some of the popular tools that help with immutable infrastructure. Furthermore, implementing the 12-factor methodology principles can also help to maintain an immutable infrastructure.

Cloud Native Architecture on AWS

DevOps complements the cloud-native architecture by providing a success-driven software delivery approach that combines speed, agility, and control. AWS augments this approach by providing the required tools.

Docker and Microservices Architecture

Docker is the most popular containerization platform. It enables organizations to package applications with all the required runtime resources, such as source code, dependencies, and libraries. This open-source container toolkit makes it easy to automate and control the tasks of building, deploying, and managing containers using simple commands and APIs.

Microservices architecture is a software development model that entails building an application as a collection of small, loosely coupled, and independently deployable services that communicate with other services via APIs. As such, you can independently build and deploy each service without dependencies on other services, making every service autonomous.
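Here is a minimal sketch of two services that know each other only through an API contract. A hypothetical inventory service answers `GET /stock/<sku>` with JSON, and a hypothetical order service depends on that response shape rather than on the inventory code. It uses only the Python standard library; the route, SKUs, and function names are invented for illustration.

```python
import json
from http.server import BaseHTTPRequestHandler

STOCK = {"sku-1": 5, "sku-2": 0}  # hypothetical inventory data


def inventory_api(path: str) -> tuple[int, dict]:
    """Inventory service logic for GET /stock/<sku>: (status, JSON payload)."""
    prefix = "/stock/"
    if not path.startswith(prefix):
        return 404, {"error": "not found"}
    sku = path[len(prefix):]
    if sku not in STOCK:
        return 404, {"error": "unknown sku"}
    return 200, {"sku": sku, "available": STOCK[sku]}


class InventoryHandler(BaseHTTPRequestHandler):
    """HTTP wrapper, so the same logic can be served over the network."""

    def do_GET(self):
        status, payload = inventory_api(self.path)
        body = json.dumps(payload).encode()
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def can_fulfil(sku: str, quantity: int, fetch=inventory_api) -> bool:
    """Order service logic: relies only on the inventory API contract."""
    status, payload = fetch(f"/stock/{sku}")
    return status == 200 and payload["available"] >= quantity
```

In a real deployment `fetch` would issue an HTTP request to the inventory service, for example one served by `http.server.HTTPServer` with `InventoryHandler`. Injecting it keeps the order logic testable without a network.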

Amazon Elastic Container Service (ECS)

Amazon Elastic Container Service (ECS) is a container orchestration service that runs containers on a cluster of Amazon EC2 instances or on AWS Fargate. With the serverless technology of Fargate, ECS manages the underlying compute for you, which means you can quickly build and deploy applications instead of spending time on patches, configurations and security policies. It integrates with popular CI/CD tools as well as with AWS native management and compliance solutions. You pay only for the resources used.

Amazon Elastic Kubernetes Service (Amazon EKS)

Amazon Elastic Kubernetes Service (EKS) is a managed service for running Kubernetes-orchestrated container applications on the AWS cloud. It uses the open-source Kubernetes software, which means you gain more extensibility in managing container environments than with Amazon ECS.

Another advantage of EKS is the range of Kubernetes ecosystem tools available for managing container clusters. For instance, Helm helps you create deployment templates, Istio provides a service mesh, and Prometheus, Jaeger, and Grafana help you gain container insights. In addition, cert-manager (originally from Jetstack) handles certificate management. Other Kubernetes service meshes are available as well. EKS also works with Fargate and CloudWatch.

AWS Fargate

AWS Fargate is a popular AWS service that enables administrators to run container clusters in the cloud without worrying about managing the underlying infrastructure. Fargate works with ECS and EKS and abstracts the containers from the underlying infrastructure, allowing users to manage containers while Fargate takes care of the underlying stack.

Developers specify access policies and parameters while packaging an application into a container, and Fargate picks it up and manages the environment. Moreover, it takes care of scaling requirements. You can simultaneously run thousands of containers to manage critical applications easily. Fargate charges are based on the memory and vCPU resources used per container application. It is easy to use and offers better security, but it is less customizable and limited by regional availability.

Serverless Computing

Serverless Computing is a cloud-native model in which developers can write code and deploy applications without managing servers. As the servers are abstracted from the application, the cloud provider handles provisioning, scaling, and server infrastructure management. This means developers can simply build applications and deploy them using containers. In this architecture, resources for applications are launched only when the code is in execution.

When an event triggers the app, the required infrastructure is automatically provisioned, and it is released once the code stops running. This means users pay only while the code is executing. A cloud native architecture can take advantage of this through serverless services such as AWS Lambda, Amazon API Gateway, and Amazon Aurora.

AWS Lambda

AWS Lambda is a popular serverless computing service that lets you run code without the need to provision and manage servers. Developers upload code as a .zip archive or a container image, and Lambda automatically provisions the underlying stack on an event-based model. Lambda runs app code in parallel and scales resources individually for each trigger. So, resource usage is closely matched to demand, and the administrative burden is greatly reduced.

Using Lambda, developers can build serverless mobile and IoT backends, where Amazon API Gateway authenticates API requests. Lambda can be combined with other AWS services to build web applications that can be deployed across multiple locations.
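A backend endpoint of this kind can be as small as a single function. The sketch below assumes an API Gateway proxy integration, in which the request arrives as an `event` dictionary and the function returns a dictionary with `statusCode`, `headers`, and a string `body`. The greeting logic and the `name` query parameter are invented for illustration.

```python
import json


def handler(event, context):
    """Lambda entry point for a hypothetical GET /hello?name=... endpoint."""
    # queryStringParameters is absent or None when no query string is sent.
    params = event.get("queryStringParameters") or {}
    name = params.get("name", "world")
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }


# Local invocation with a hand-built event; no AWS account is needed.
response = handler({"queryStringParameters": {"name": "Ada"}}, None)
```

Because the handler is a plain function, it can be exercised locally with hand-built events before it is deployed.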

Event-Driven Architecture

This architecture complements the serverless architecture. The idea is simple: systems or isolated services run in response to events. Because resources run only when an event triggers them, automating this process can dramatically reduce your cloud costs. Taken together, the patterns above abstract your IT layers and cut costs through autoscaling, microservices, serverless, and event-driven architecture.
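The core of the event-driven idea fits in a small in-process sketch: services register interest in an event type and run only when such an event is published. In a real system a managed event or messaging service would play the role of the bus; the `EventBus` class and the `file.uploaded` event name here are hypothetical.

```python
from collections import defaultdict


class EventBus:
    def __init__(self):
        self._handlers = defaultdict(list)  # event type -> list of handlers

    def subscribe(self, event_type, handler):
        self._handlers[event_type].append(handler)

    def publish(self, event_type, payload):
        """Run every handler registered for this event type and
        return how many handlers ran."""
        handlers = self._handlers.get(event_type, [])
        for handler in handlers:
            handler(payload)
        return len(handlers)


bus = EventBus()
thumbnails = []
audit_log = []

# Two independent services react to the same event.
bus.subscribe("file.uploaded", lambda event: thumbnails.append(f"thumb-{event['key']}"))
bus.subscribe("file.uploaded", lambda event: audit_log.append(event["key"]))

handled = bus.publish("file.uploaded", {"key": "cat.png"})
ignored = bus.publish("file.deleted", {"key": "cat.png"})  # nobody subscribed
```

The publisher does not know which services react, so new consumers can be added without touching it.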

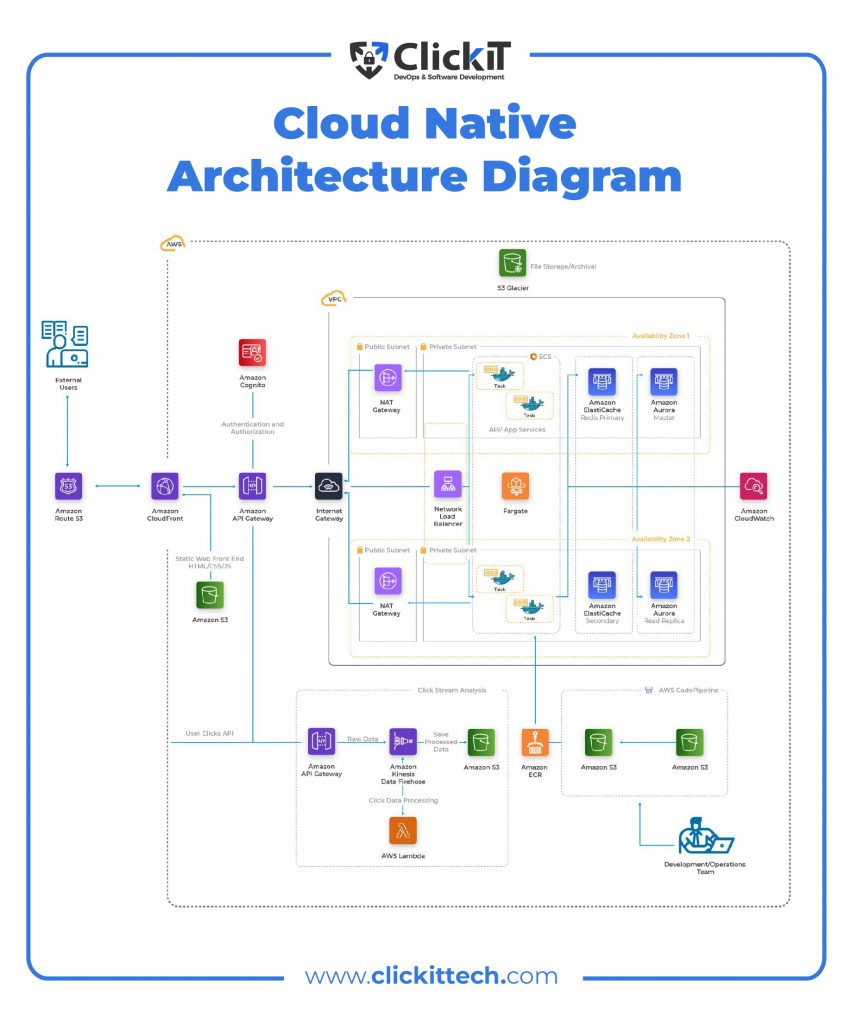

Cloud Native Architecture Diagram

External Users

- External users request access to cloud resources via the Amazon Route 53 DNS service.

- The request is sent to the Amazon CloudFront Content Delivery Network (CDN) service.

- As depicted in the cloud native application architecture diagram, Amazon Cognito, a secure sign-on and authentication service, authenticates user credentials.

- The user data is also sent to clickstream analysis, powered by Amazon Kinesis and AWS Lambda serverless technology, and the processed data is stored in Amazon S3.

- The traffic is sent to the virtual private cloud via an Internet gateway.

- The network load balancer will route the traffic to the available servers.

- External users can access the API / App services powered by Fargate technology as shown in the cloud native architecture diagram.

The Role of a DevOps Team in Cloud Native Architecture Diagram

- The development and operations team uses the AWS CodePipeline.

- They write code and commit to the private Git repositories that are managed by the AWS CodeCommit service.

- The AWS CodeBuild continuous integration service picks up the code and compiles it into deployable software packages.

- Software that is packaged into containers using CloudFormation templates is uploaded to the Amazon Elastic Container Registry.

- Containers are deployed to the production environment powered by Fargate.

- Amazon S3 Glacier is used for file storage and archival purposes in this cloud native architecture diagram.

- Amazon ElastiCache for Redis is used for in-memory storage and cache for primary and secondary servers.

- Amazon RDS or Amazon Aurora, which is compatible with PostgreSQL and MySQL, is used for relational database services in this cloud native architecture diagram.

- Amazon CloudWatch can be used to monitor applications and infrastructure.
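The delivery flow in the list above (commit, build, package, deploy) behaves like a chain of stages in which a failure stops everything after it. The sketch below models only that control flow. The stage names and the `tests_pass` flag are hypothetical, and it does not call AWS CodePipeline or any other real service.

```python
def run_pipeline(stages, context):
    """Run stages in order and stop at the first one that fails."""
    completed = []
    for name, step in stages:
        if not step(context):
            return {"status": "failed", "failed_stage": name, "completed": completed}
        completed.append(name)
    return {"status": "succeeded", "failed_stage": None, "completed": completed}


# Each stage is a function that returns True on success (stand-ins only).
stages = [
    ("build", lambda ctx: True),
    ("test", lambda ctx: ctx["tests_pass"]),
    ("push_image", lambda ctx: True),
    ("deploy", lambda ctx: True),
]

green_run = run_pipeline(stages, {"tests_pass": True})
red_run = run_pipeline(stages, {"tests_pass": False})
```

In the failing run nothing is pushed or deployed, which is the point of gating releases on automated tests.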

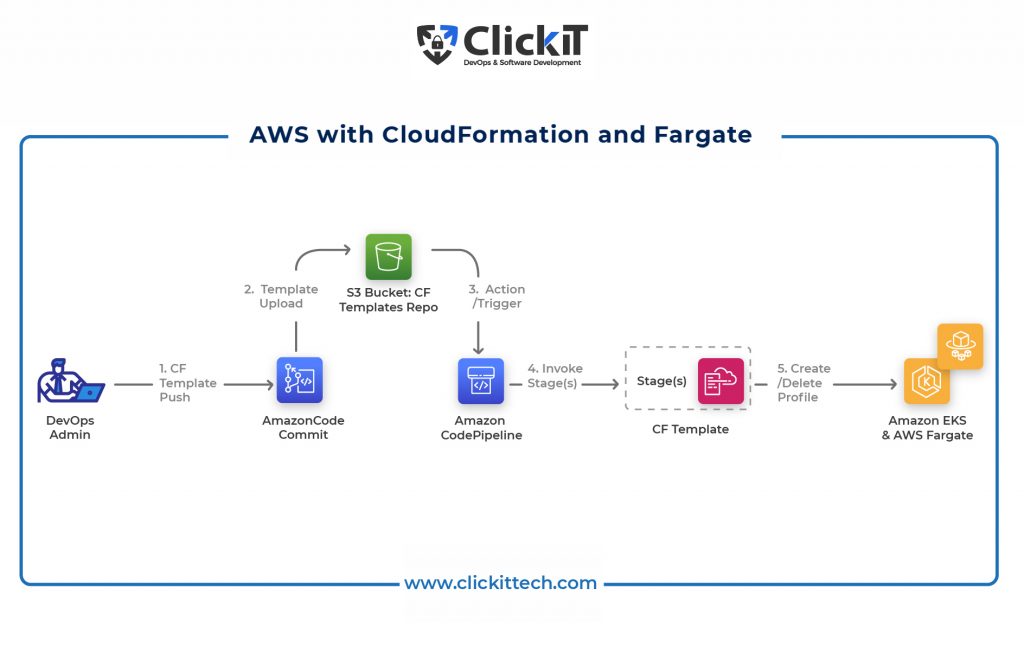

Provisioning AWS resources using CloudFormation and Fargate

In the cloud native architecture diagram, you can also use CloudFormation, a powerful IaC tool for provisioning and managing resources on AWS. Fargate is a serverless compute engine that provides the underlying infrastructure for your containers. Together, CloudFormation and Fargate help you seamlessly deploy and manage resources in the AWS cloud.

Here is how you can automatically manage your infrastructure with CloudFormation:

- A DevOps admin creates a Fargate profile as a JSON file using the CloudFormation template. The profile file must have a valid EKS cluster name, the logical ID of the profile resource, and a profile property.

- The admin commits the profile to the AWS CodeCommit repository.

- When a change is detected in the CloudFormation template repo, the AWS CodePipeline is triggered, tasks are executed, and the profile is pushed to the deployment.

- The stack is launched, and the EKS service is updated on the changes to the infrastructure.
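The JSON profile from the first step can be generated rather than written by hand. The sketch below builds a minimal CloudFormation template containing one `AWS::EKS::FargateProfile` resource. The logical ID `AppFargateProfile`, the cluster name, the namespace, and the role ARN (which uses a placeholder account ID) are all illustrative assumptions; a real template would use your own values and possibly more properties.

```python
import json


def fargate_profile_template(cluster_name, pod_execution_role_arn, namespace):
    """Return a CloudFormation template (as a dict) with one Fargate profile."""
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Resources": {
            "AppFargateProfile": {  # logical ID of the profile (hypothetical)
                "Type": "AWS::EKS::FargateProfile",
                "Properties": {
                    "ClusterName": cluster_name,
                    "PodExecutionRoleArn": pod_execution_role_arn,
                    # Pods in this namespace are scheduled onto Fargate.
                    "Selectors": [{"Namespace": namespace}],
                },
            }
        },
    }


template = fargate_profile_template(
    cluster_name="example-cluster",  # must name an existing EKS cluster
    pod_execution_role_arn="arn:aws:iam::123456789012:role/example-pod-execution-role",
    namespace="example-apps",
)
rendered = json.dumps(template, indent=2)  # the file committed to the repository
```

Generating the file from code keeps the cluster name and role in one place when the same profile is needed for several environments.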

Using CloudFormation and Fargate, organizations can automatically create and manage new environments during production and development.

Conclusion of Cloud Native Architecture

So, are you Cloud Native or not? If not, what are you waiting for? In today’s rapidly changing technological world, cloud native architecture is no longer optional; it is a necessity. Change is the only constant thing in the cloud, which means your software development environment should be flexible enough to adapt quickly to new technologies and methodologies without disturbing business operations.

Cloud native architecture provides the right environment to build applications using tools, technologies, and processes. The key to fully leveraging the cloud revolution is designing the right cloud architecture for your software development requirements. Implementing the right automation in the right areas, making the most of managed services, incorporating DevOps best practices, and applying the best cloud native application architecture patterns are recommended.

Cloud Native Architecture FAQ

Cloud-native products or applications are ones that are created using a cloud native architecture. Simply put, they are born in the cloud. On the contrary, cloud-enabled products are built using traditional methods and are migrated to the cloud.

Kubernetes is a leader in the container orchestration segment. Some of the other tools in this segment include Docker Swarm, Nomad and Apache Mesos.

Cloud Native Computing Foundation (CNCF) is a subsidiary of the Linux Foundation established in 2015. This open-source software foundation comprises a vendor-agnostic developer community that collaborates on open-source projects. By democratizing cloud native architecture patterns, CNCF makes them accessible for everyone. Microsoft, AWS, Google, Oracle, and SAP are some of CNCF’s key members.

The terms ‘cloud-first’ and ‘cloud-only’ are often interchangeably used. However, they are not the same. A cloud-first strategy prioritizes a cloud technology while implementing a new IT infrastructure or platform. A cloud-only strategy moves all systems and services to a cloud-native architecture.

Microservices: Breaking applications into small, independent services.

Containerization: Using containers for consistency and resource efficiency.

Dynamic Orchestration: Managing containers and services dynamically.

CI/CD: Automating the integration and deployment of code changes.

DevOps Culture: Emphasizing collaboration between development and operations teams.